Measuring Bias in Machine Learning: The Statistical Bias Test

DataCamp

MAY 5, 2020

This tutorial will define statistical bias in a machine learning model and demonstrate how to perform the test on synthetic data.

CIO Business Intelligence

NOVEMBER 20, 2023

Measuring developer productivity has long been a Holy Grail of business. The US Bureau of Labor Statistics has projected that the number of software developers will grow 25% from 2021-31. In addition, system, team, and individual productivity all need to be measured. And like the Holy Grail, it has been elusive.

IBM Big Data Hub

MAY 17, 2024

Given the statistics (82% of surveyed respondents in a 2023 Statista study cited managing cloud spend as a significant challenge), it’s a legitimate concern. Cloud maturity models (or CMMs) are frameworks for evaluating an organization’s cloud adoption readiness on both a macro and individual service level.

IBM Big Data Hub

APRIL 13, 2023

After developing a machine learning model, you need a place to run your model and serve predictions. If your company is in the early stage of its AI journey or has budget constraints, you may struggle to find a deployment system for your model. Also, a column in the dataset indicates if each flight had arrived on time or late.

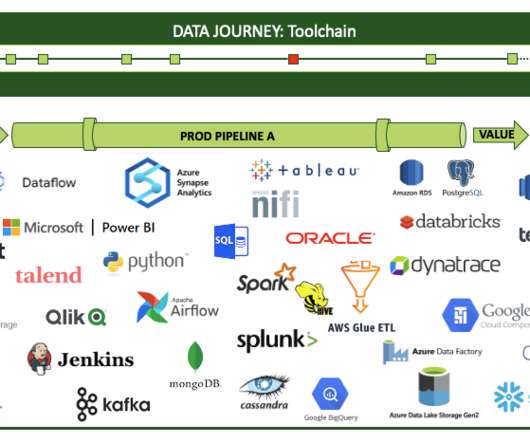

DataKitchen

MARCH 12, 2024

We kept adding tests over time; it has been several years since we’ve had any major glitches. DataKitchen helped us completely transform our operations by broadening our testing definition. Tests assess important questions, such as “Is the data correct?”

O'Reilly on Data

DECEMBER 12, 2019

Not least is the broadening realization that ML models can fail. And that’s why model debugging, the art and science of understanding and fixing problems in ML models, is so critical to the future of ML. Because all ML models make mistakes, everyone who cares about ML should also care about model debugging. [1]

DataKitchen

NOVEMBER 18, 2022

As he thinks through the various journeys that data take in his company, Jason sees that his dashboard idea would require extracting or testing for events along the way. Data and tool tests. Observability users are then able to see and measure the variance between expectations and reality during and after each run.

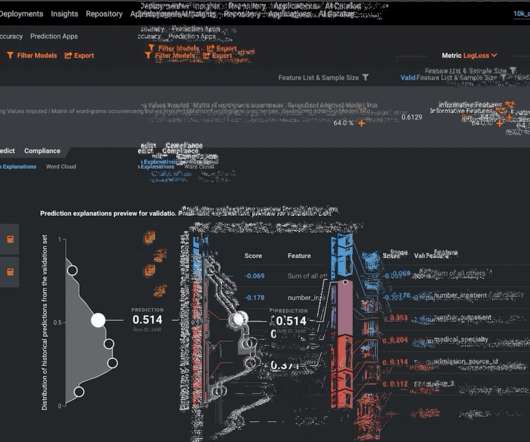

DataRobot Blog

MAY 26, 2022

Last time, we discussed the steps that a modeler must pay attention to when building out ML models to be utilized within the financial institution. In summary, to ensure that they have built a robust model, modelers must make certain that they have designed the model in a way that is backed by research and industry-adopted practices.

CIO Business Intelligence

JULY 5, 2022

Business analytics is the practical application of statistical analysis and technologies on business data to identify and anticipate trends and predict business outcomes. Data analytics is used across disciplines to find trends and solve problems using data mining, data cleansing, data transformation, data modeling, and more.

O'Reilly on Data

JULY 28, 2020

Product Managers are responsible for the successful development, testing, release, and adoption of a product, and for leading the team that implements those milestones. When a measure becomes a target, it ceases to be a good measure (Goodhart’s Law). You must detect when the model has become stale, and retrain it as necessary.

CIO Business Intelligence

JUNE 7, 2022

The chief aim of data analytics is to apply statistical analysis and technologies on data to find trends and solve problems. Data analytics draws from a range of disciplines — including computer programming, mathematics, and statistics — to perform analysis on data in an effort to describe, predict, and improve performance.

IBM Big Data Hub

APRIL 9, 2024

Preparing and annotating data: IBM watsonx.data helps organizations put their data to work, curating and preparing data for use in AI models and applications. “For the Masters we use 290 traditional AI models to project where golf balls will land,” says Baughman.

Domino Data Lab

JULY 17, 2021

The system here will identify, in some meaningful sense, which existing speaker’s model the utterance matches. If the unknown utterance is spoken by a speaker outside the list of existing speakers, the model will nonetheless map it to some speaker from that list. The existing applications of person authentication include:

CIO Business Intelligence

JUNE 14, 2023

Certifications measure your knowledge and skills against industry- and vendor-specific benchmarks to prove to employers that you have the right skillset. Organization: AWS. Price: US$300. How to prepare: Amazon offers free exam guides, sample questions, practice tests, and digital training.

O'Reilly on Data

MAY 18, 2020

This article answers these questions, based on our combined experience as both a lawyer and a data scientist responding to cybersecurity incidents, crafting legal frameworks to manage the risks of AI, and building sophisticated interpretable models to mitigate risk. And last is the probabilistic nature of statistics and machine learning (ML).

Domino Data Lab

OCTOBER 7, 2020

The Curse of Dimensionality, or Large P, Small N (P >> N), problem applies to the latter case of many variables measured on relatively few samples. Statistical methods for analyzing this two-dimensional data exist. This statistical test is correct because the data are (presumably) bivariate normal.

IBM Big Data Hub

NOVEMBER 29, 2023

With the emergence of new advances and applications in machine learning models and artificial intelligence, including generative AI, generative adversarial networks, computer vision and transformers, many businesses are seeking to address their most pressing real-world data challenges using both types of synthetic data: structured and unstructured.

Smarten

JUNE 29, 2018

This article discusses the Paired Sample T Test method of hypothesis testing and analysis. What is the Paired Sample T Test? The Paired Sample T Test is used to determine whether the mean of a dependent variable e.g., weight, anxiety level, salary, reaction time, etc., is the same in two related groups.
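As a rough illustration of the excerpt above, the paired-sample t statistic can be sketched with the Python standard library alone. The function name `paired_t_statistic` and the weight figures are hypothetical and do not come from the Smarten article; the sketch returns only the statistic and its degrees of freedom, and leaves the p-value lookup to a statistics package.

```python
import math
import statistics


def paired_t_statistic(before, after):
    """Return (t, degrees_of_freedom) for two related samples.

    The test works on the per-pair differences: t is the mean difference
    divided by its standard error.
    """
    if len(before) != len(after):
        raise ValueError("paired samples must have the same length")
    if len(before) < 2:
        raise ValueError("need at least two pairs")

    diffs = [a - b for b, a in zip(before, after)]
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    if sd_diff == 0:
        raise ValueError("all differences are identical; t is undefined")

    n = len(diffs)
    t = statistics.mean(diffs) / (sd_diff / math.sqrt(n))
    return t, n - 1


# Hypothetical weights (kg) for the same five people, measured twice.
before = [82.0, 75.5, 90.2, 68.4, 77.1]
after = [80.1, 74.8, 88.0, 68.9, 75.3]
t, df = paired_t_statistic(before, after)
print(f"t = {t:.3f}, df = {df}")
```

A t value far from zero, judged against a t distribution with `df` degrees of freedom, would suggest that the mean of the dependent variable differs between the two related groups.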

IBM Big Data Hub

AUGUST 9, 2023

A phishing simulation is a cybersecurity exercise that tests an organization’s ability to recognize and respond to a phishing attack. Why phishing simulations are important Recent statistics show phishing threats continue to rise. The only difference is that recipients who take the bait (e.g., million phishing sites.

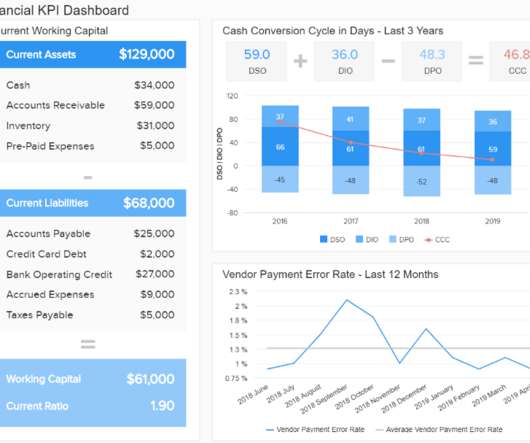

datapine

JANUARY 6, 2022

Yet, before any serious data interpretation inquiry can begin, it should be understood that visual presentations of data findings are irrelevant unless a sound decision is made regarding scales of measurement. Interval: a measurement scale where data is grouped into categories with orderly and equal distances between the categories.

datapine

JANUARY 24, 2021

Additionally, incorporating decision support system software can save a company a lot of time: combining information from raw data, documents, personal knowledge, and business models will provide a solid foundation for solving business problems. There are basically 4 types of scales: *Statistics Level Measurement Table*.

Smarten

JUNE 29, 2018

This article focuses on the Independent Samples T Test technique of Hypothesis testing. What is the Independent Samples T Test Method of Hypothesis Testing? Let’s look at a sample of the Independent t-test on two variables. One is a dimension containing two values and the other is a measure.
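The setup described above, a two-valued dimension plus a measure, can be sketched as follows. This is a minimal example under stated assumptions: it uses Welch's unequal-variance form of the independent-samples t statistic, which may not be the variant the Smarten article applies, and the function name `welch_t_statistic` and the sample rows are hypothetical.

```python
import math
import statistics
from collections import defaultdict


def welch_t_statistic(rows):
    """Return (t, df) for rows of (dimension_value, measure) pairs.

    The dimension must take exactly two distinct values, and each group
    needs at least two observations so a sample variance exists.
    """
    groups = defaultdict(list)
    for label, value in rows:
        groups[label].append(value)
    if len(groups) != 2:
        raise ValueError("the dimension must contain exactly two values")

    first, second = sorted(groups)  # fixed order so the sign of t is stable
    a, b = groups[first], groups[second]
    if len(a) < 2 or len(b) < 2:
        raise ValueError("each group needs at least two observations")

    # Variance of each group mean: s^2 / n
    va = statistics.variance(a) / len(a)
    vb = statistics.variance(b) / len(b)
    if va + vb == 0:
        raise ValueError("both groups have zero variance; t is undefined")

    t = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(va + vb)
    # Welch-Satterthwaite approximation to the degrees of freedom
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


# Hypothetical data: a two-valued dimension ("north"/"south") and a measure.
rows = [("north", 12.1), ("north", 14.3), ("north", 13.8),
        ("south", 10.2), ("south", 11.9), ("south", 9.7)]
t, df = welch_t_statistic(rows)
print(f"t = {t:.3f}, df = {df:.2f}")
```

Welch's form is chosen here only because it does not require the two groups to share a variance; the pooled-variance version differs in how the standard error and degrees of freedom are computed.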

CIO Business Intelligence

JULY 24, 2023

“We started by giving this data to the technical staff of the clubs, but we decided it was the moment to offer these advanced statistics to the fans and the media,” Bruno says. It has also developed predictive models to detect trends, make predictions, and simulate results. “We followed the design thinking process,” says Bruno.

Occam's Razor

SEPTEMBER 19, 2011

How do you get over the frustration of having done attribution modeling and realizing that it is not even remotely the solution to your challenge of using multiple media channels? You need people with deep skills in Scientific Method, Design of Experiments, and Statistical Analysis. The nice thing is that you can also test that!

The Unofficial Google Data Science Blog

JANUARY 16, 2018

We present data from Google Cloud Platform (GCP) as an example of how we use A/B testing when users are connected. Experimentation on networks: A/B testing is a standard method of measuring the effect of changes by randomizing samples into different treatment groups. This could create confusion.

Ontotext

FEBRUARY 14, 2024

Ivory tower modeling: We’ve seen too many models developed by isolated ontologists that don’t survive the first battle with the data. There’s a famous saying by a statistician, George Box, “All models are wrong, but some are useful.” So, how do you know whether your model is useful?

Smarten

JUNE 17, 2022

After completion of the testing procedure, the certificate is provided to show that all requirements were met. As a certified CERT-IN service and product provider, Smarten adds additional security assurances to its already rich foundation of security measures and methodologies to support clients, partners and stakeholders.

Smart Data Collective

OCTOBER 8, 2023

The Power of Data Analytics: An Overview. Data analytics, in its simplest form, is the process of inspecting, cleansing, transforming, and modeling data to unearth useful information, draw conclusions, and support decision-making. It is an interdisciplinary field, combining computer science, statistics, mathematics, and business intelligence.

Cloudera

DECEMBER 3, 2021

This involves identifying, quantifying and being able to measure ethical considerations while balancing these with performance objectives. For example, the education data used to train an interview screening model often contains gender information. As discussed in this article, model design can also be a source of bias.

datapine

SEPTEMBER 29, 2022

5) How Do You Measure Data Quality? In this article, we will detail everything which is at stake when we talk about DQM: why it is essential, how to measure data quality, the pillars of good quality management, and some data quality control techniques. These needs are then quantified into data models for acquisition and delivery.

Smart Data Collective

MARCH 28, 2022

It can be further classified as statistical and predictive modeling, but the two are closely associated with each other. They can be again classified as random testing and optimization. This includes studying factors like test scores, teacher performances, and graduation rates.

Smarten

MAY 29, 2023

World-renowned technology analysis firm Gartner defines the role this way: ‘A citizen data scientist is a person who creates or generates models that leverage predictive or prescriptive analytics, but whose primary job function is outside of the field of statistics and analytics.’ Automatic generation of models.

Smarten

APRIL 12, 2023

About Smarten The Smarten approach to business intelligence and business analytics focuses on the business user and provides Advanced Data Discovery so users can perform early prototyping and test hypotheses without the skills of a data scientist.

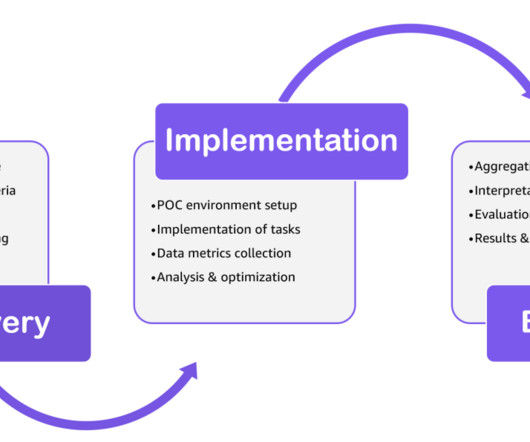

AWS Big Data

MARCH 27, 2024

By testing the solution against key metrics, a POC provides insights that allow you to make an informed decision on the suitability of the technology for the intended use case. Complete the implementation tasks such as data ingestion and performance testing. Collect data metrics and statistics on the completed tasks.

O'Reilly on Data

MARCH 31, 2020

All you need to know for now is that machine learning uses statistical techniques to give computer systems the ability to “learn” by being trained on existing data. This has serious implications for software testing, versioning, deployment, and other core development processes. Machine learning adds uncertainty.

Insight

MARCH 12, 2020

The AIgent was built with BERT, Google’s state-of-the-art language model. In this article, I will discuss the construction of the AIgent, from data collection to model assembly. Features: DistilBERT Text Embeddings Once I had a raw dataset, I could begin engineering features and building a natural language processing (NLP) model.

CIO Business Intelligence

AUGUST 24, 2023

And with large language models (LLM), data governance is in its infancy. Retraining, deployment, operations, testing—a lot of these features just aren’t available yet.” That can include learning how to verify that correct controls are in place, models are isolated, and they’re appropriately used, he says. AI is a black box.

CIO Business Intelligence

APRIL 14, 2022

DaaS offerings have been evolving for decades, but lately developers have recognized that a cloud model, with its flexible, usage-based pricing, could more readily help connect enterprises with data sources the vendors seek to monetize. Perhaps your algorithm needs testing a street full of drunk pedestrians at Mardi Gras? Synthesis AI.

CIO Business Intelligence

FEBRUARY 13, 2023

The name references the Greek letter sigma, which is a statistical symbol that represents a standard deviation. Larger is Better involves a “lower specification limit,” such as test scores — where the target is 100%. At this stage, it’s important to establish performance baselines, future goals, and how performance will be measured.

IBM Big Data Hub

APRIL 18, 2024

While these large language model (LLM) technologies might seem like it sometimes, it’s important to understand that they are not the thinking machines promised by science fiction. LLMs like ChatGPT are trained on massive amounts of text data, allowing them to recognize patterns and statistical relationships within language.

BizAcuity

APRIL 1, 2023

Widely used to discover trends and patterns, check assumptions, and spot anomalies or outliers, EDA involves a variety of techniques, including statistical analysis and machine learning, to gain a better understanding of data. Building a predictive model is a continuous process and commitment.

DataRobot

APRIL 20, 2021

In this installment, I’ll cover four key elements of trusted AI that relate to the performance of a model: data quality, accuracy, robustness and stability, and speed. The performance of any machine learning model is tightly linked to the data it was trained on and validated against. Quality Input Means Quality Output.

BizAcuity

JANUARY 18, 2023

Widely used to discover trends and patterns, check assumptions, and spot anomalies or outliers, EDA involves a variety of techniques, including statistical analysis and machine learning, to gain a better understanding of data. Predictive modeling for flagging suspicious activity. Steps to building a highly accurate predictive model for AML.

Domino Data Lab

OCTOBER 2, 2019

The case that might be familiar to you is an A/B test. You can make a change to a product and test it against the original version of the product. The control group experiences the original algorithm, and the test group experiences the new version. You can use a regression model. Let’s continue with this example.
