Use Amazon Athena with Spark SQL for your open-source transactional table formats

AWS Big Data

JANUARY 24, 2024

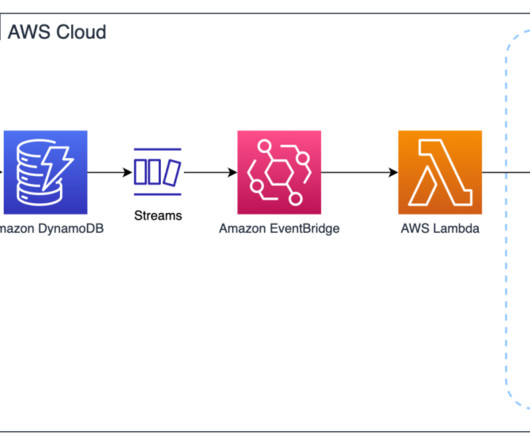

These formats enable ACID (atomicity, consistency, isolation, durability) transactions, upserts, and deletes, and advanced features such as time travel and snapshots that were previously only available in data warehouses. It will never remove files that are still required by a non-expired snapshot.

Let's personalize your content