Optimize data layout by bucketing with Amazon Athena and AWS Glue to accelerate downstream queries

AWS Big Data

APRIL 25, 2024

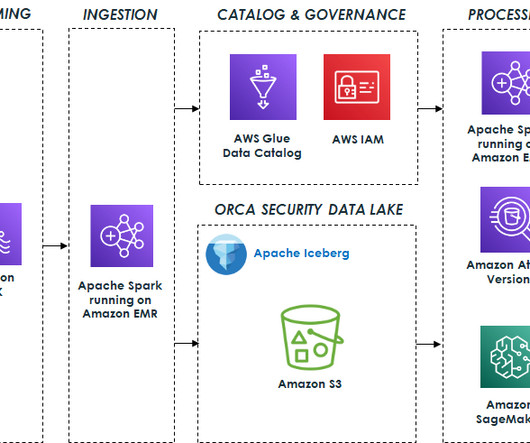

In the era of data, organizations are increasingly using data lakes to store and analyze vast amounts of structured and unstructured data. Data lakes provide a centralized repository for data from various sources, enabling organizations to unlock valuable insights and drive data-driven decision-making.

Let's personalize your content