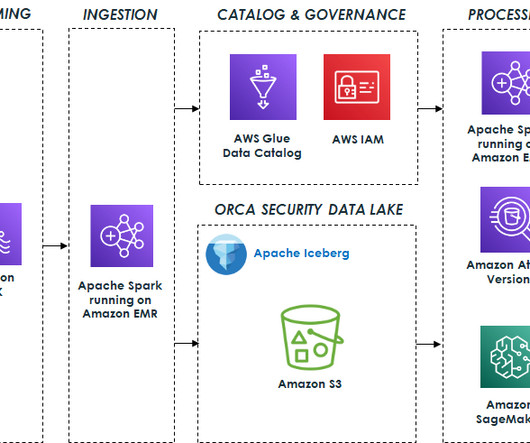

Orca Security’s journey to a petabyte-scale data lake with Apache Iceberg and AWS Analytics

AWS Big Data

JULY 20, 2023

One key component that plays a central role in modern data architectures is the data lake, which allows organizations to store and analyze large amounts of data in a cost-effective manner and run advanced analytics and machine learning (ML) at scale. Why did Orca build a data lake?

Let's personalize your content