How to use foundation models and trusted governance to manage AI workflow risk

IBM Big Data Hub

OCTOBER 16, 2023

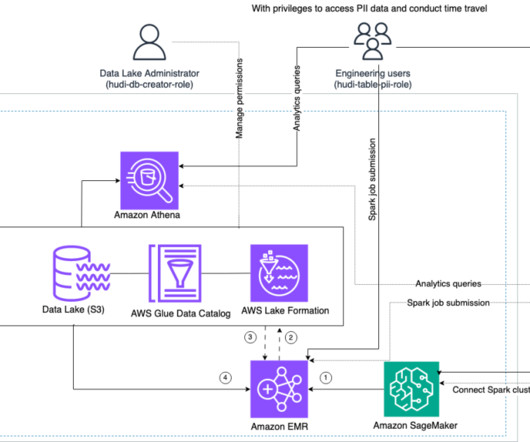

It includes processes that trace and document the origin of data, models and associated metadata and pipelines for audits. How to scale AL and ML with built-in governance A fit-for-purpose data store built on an open lakehouse architecture allows you to scale AI and ML while providing built-in governance tools.

Let's personalize your content