Exploring real-time streaming for generative AI Applications

AWS Big Data

MARCH 25, 2024

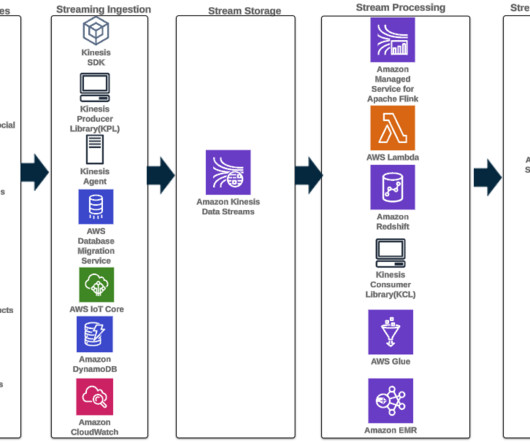

Furthermore, a stream processor filters, enriches, and transforms data events into a consumable format. The application then accesses the result by querying the latest snapshot. For more details, refer to Create a low-latency source-to-data lake pipeline using Amazon MSK Connect, Apache Flink, and Apache Hudi.
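The filter, enrich, transform, and snapshot steps described above can be illustrated with a minimal, framework-free sketch. It is not the Apache Flink or Apache Hudi API. The event fields (`type`, `customer_id`, `amount_cents`, `ts`), the `REGION_BY_CUSTOMER` lookup table, and the function names are all hypothetical, and an in-memory dictionary stands in for the queryable snapshot.

```python
from typing import Dict, Iterable, Iterator

# Hypothetical reference data used to enrich events (assumption, not from the article).
REGION_BY_CUSTOMER: Dict[str, str] = {"c1": "us-east-1", "c2": "eu-west-1"}


def process(events: Iterable[dict]) -> Iterator[dict]:
    """Filter, enrich, and transform raw events into a consumable record."""
    for event in events:
        # Filter: keep only the event type the application cares about.
        if event.get("type") != "order":
            continue
        # Enrich: attach reference data that the raw event does not carry.
        region = REGION_BY_CUSTOMER.get(event["customer_id"], "unknown")
        # Transform: reshape into the format the application consumes.
        yield {
            "customer_id": event["customer_id"],
            "region": region,
            "amount_usd": round(event["amount_cents"] / 100, 2),
            "ts": event["ts"],
        }


def latest_snapshot(records: Iterable[dict]) -> Dict[str, dict]:
    """Keep only the most recent record per customer, keyed by customer_id."""
    snapshot: Dict[str, dict] = {}
    for record in records:
        current = snapshot.get(record["customer_id"])
        if current is None or record["ts"] >= current["ts"]:
            snapshot[record["customer_id"]] = record
    return snapshot


raw_events = [
    {"type": "order", "customer_id": "c1", "amount_cents": 1250, "ts": 1},
    {"type": "heartbeat", "customer_id": "c1", "ts": 2},
    {"type": "order", "customer_id": "c1", "amount_cents": 999, "ts": 3},
    {"type": "order", "customer_id": "c3", "amount_cents": 500, "ts": 2},
]

snapshot = latest_snapshot(process(raw_events))
print(snapshot["c1"])
```

In a real pipeline the stream processor would run continuously and write to a table format that supports snapshot queries. The dictionary here only mimics the "latest state per key" view that the application reads.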
