Use Apache Iceberg in a data lake to support incremental data processing

AWS Big Data

MARCH 2, 2023

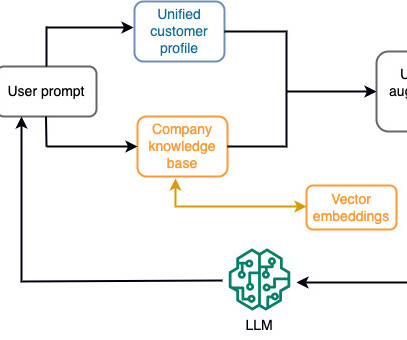

Apache Iceberg has become popular for its support for ACID transactions in data lakes and for features such as schema and partition evolution, time travel, and rollback. AWS analytics services, including Amazon EMR, Amazon Athena, and AWS Glue, support Apache Iceberg integration. In an Iceberg table, each snapshot points to a manifest list, which in turn lists the manifest files that track the table's data files.
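
To make the snapshot and manifest list relationship mentioned above more concrete, here is a minimal sketch in plain Python. It is a toy model, not the Iceberg API: the class names (`Snapshot`, `ManifestList`, `Manifest`), the helper functions, and the file paths are all hypothetical, and real Iceberg manifests carry much more metadata (for example, partition statistics and per-file status). The sketch only illustrates why this layout suits incremental processing: comparing the files reachable from two snapshots shows what was added between them.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Manifest:
    # Simplified: a manifest tracks a set of data file paths.
    data_files: List[str]


@dataclass
class ManifestList:
    # Simplified: a manifest list references one or more manifests.
    manifests: List[Manifest]


@dataclass
class Snapshot:
    # Each snapshot points to exactly one manifest list.
    snapshot_id: int
    manifest_list: ManifestList


def files_in(snapshot: Snapshot) -> Set[str]:
    """Collect every data file reachable from a snapshot."""
    return {
        path
        for manifest in snapshot.manifest_list.manifests
        for path in manifest.data_files
    }


def added_files(old: Snapshot, new: Snapshot) -> List[str]:
    """Return files present in `new` but not in `old` (hypothetical helper)."""
    return sorted(files_in(new) - files_in(old))


# Hypothetical example data: the second snapshot reuses the first manifest
# and adds a new one, as happens after an append.
m1 = Manifest(["data/a.parquet", "data/b.parquet"])
m2 = Manifest(["data/c.parquet"])
s1 = Snapshot(1, ManifestList([m1]))
s2 = Snapshot(2, ManifestList([m1, m2]))

print(added_files(s1, s2))  # ['data/c.parquet']
```

An incremental job built on this idea would read only the files returned for the snapshots it has not yet processed, rather than rescanning the whole table.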
