5 Hardware Accelerators Every Data Scientist Should Leverage

Smart Data Collective

APRIL 5, 2022

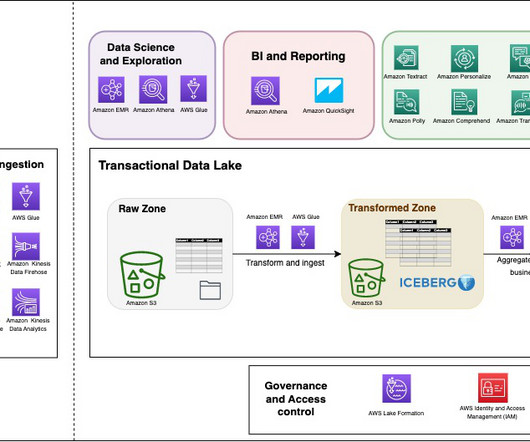

Companies and individuals with the computing power that data scientists might need can sell it in exchange for cryptocurrencies. Offering hardware accelerators through this kind of incentive-based approach has a number of powerful benefits.
