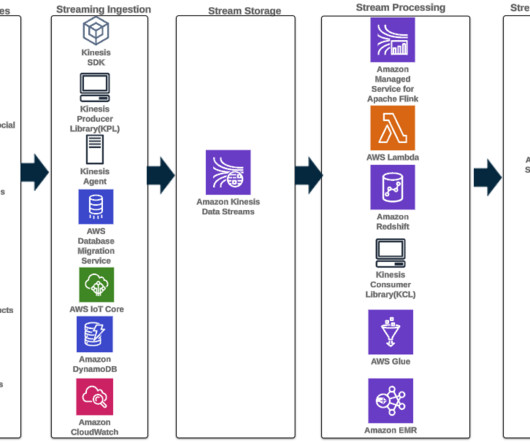

Exploring real-time streaming for generative AI applications

AWS Big Data

MARCH 25, 2024

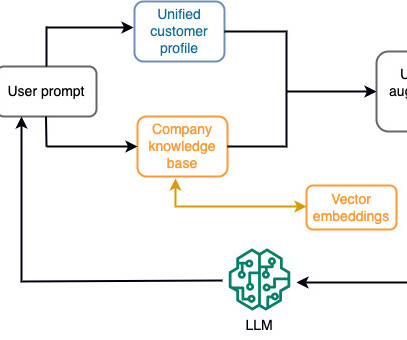

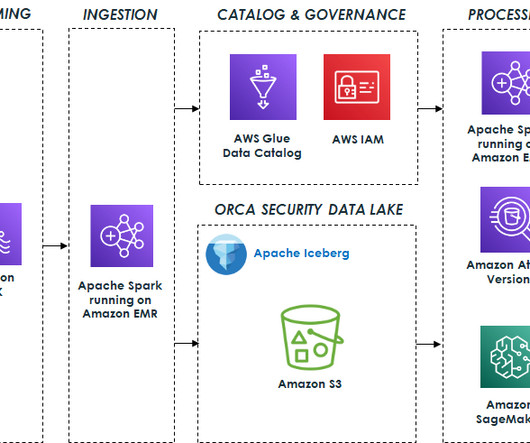

Stream processing, however, can give the chatbot access to real-time data, so it can adapt to changes in availability and price, give the customer the best guidance, and improve the customer experience. When the model detects an anomaly or an abnormal metric value, it should immediately raise an alert and notify the operator.
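
To make the alerting idea concrete, here is a minimal sketch of flagging an abnormal metric value as soon as it arrives in a stream. It assumes a simple rolling z-score check rather than whatever model the article has in mind, and every name in it (`detect_anomalies`, `notify`, `window`, `threshold`, `min_samples`) is hypothetical. A real deployment would likely read from a managed streaming service instead of an in-memory iterable.

```python
from collections import deque
from statistics import mean, stdev
from typing import Callable, Deque, Iterable


def detect_anomalies(
    stream: Iterable[float],
    notify: Callable[[int, float], None],
    window: int = 20,
    threshold: float = 3.0,
    min_samples: int = 5,
) -> None:
    """Call notify(index, value) when a value deviates strongly from recent history.

    Assumption: "anomaly" here means a rolling z-score above `threshold`.
    This is an illustrative stand-in, not the article's method.
    """
    history: Deque[float] = deque(maxlen=window)
    for index, value in enumerate(stream):
        # Only score once there is enough history for a stable baseline.
        if len(history) >= min_samples:
            mu = mean(history)
            sigma = stdev(history)
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                notify(index, value)  # alert the operator immediately
                continue  # keep the anomalous value out of the baseline
        history.append(value)


if __name__ == "__main__":
    # Hypothetical metric readings with one obvious spike.
    readings = [10.0, 10.2, 9.9, 10.1, 10.0, 9.8, 10.1, 50.0, 10.0]
    detect_anomalies(
        readings,
        notify=lambda i, v: print(f"ALERT: reading {i} has abnormal value {v}"),
    )
```

The detector processes one value at a time and keeps only a bounded window of history, which is what lets the alert fire as the data arrives instead of after a batch job completes.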
