Straumann Group is transforming dentistry with data, AI

CIO Business Intelligence

FEBRUARY 16, 2023

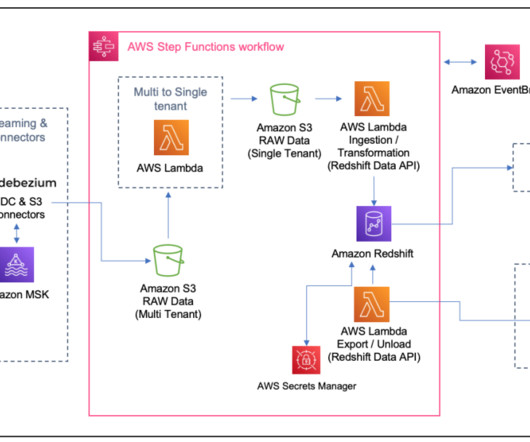

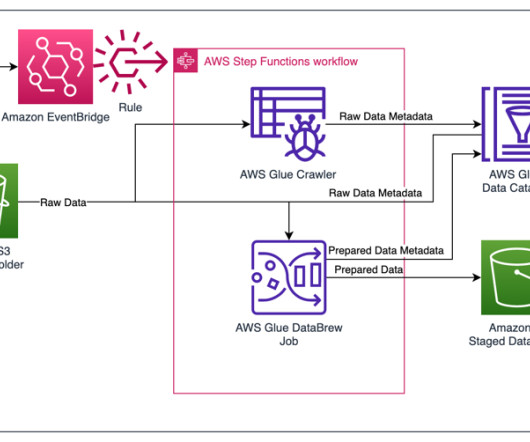

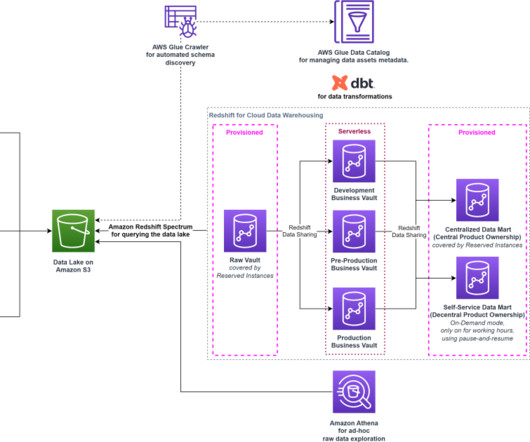

“Digitizing was our first stake at the table in our data journey,” he says. That step, primarily undertaken by developers and data architects, established data governance and data integration. That step, primarily undertaken by developers and data architects, established data governance and data integration.

Let's personalize your content