Salesforce debuts Zero Copy Partner Network to ease data integration

CIO Business Intelligence

APRIL 25, 2024

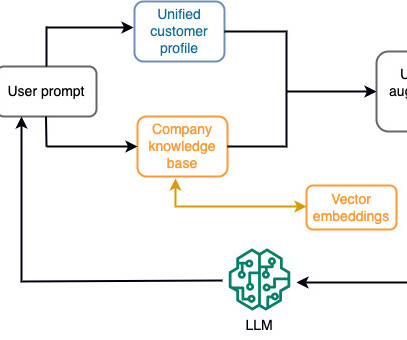

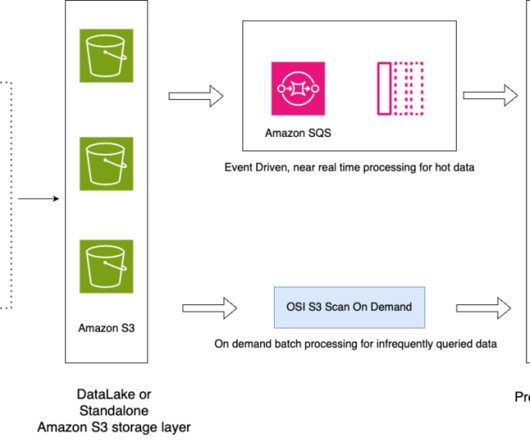

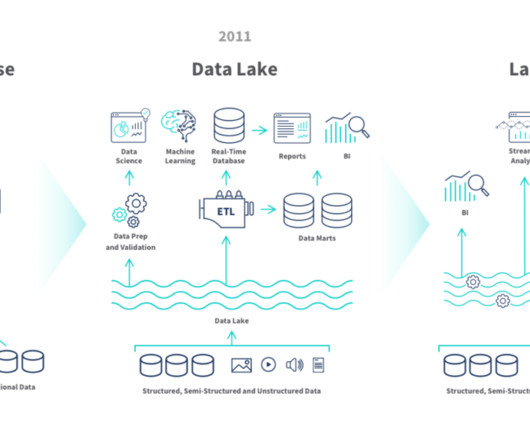

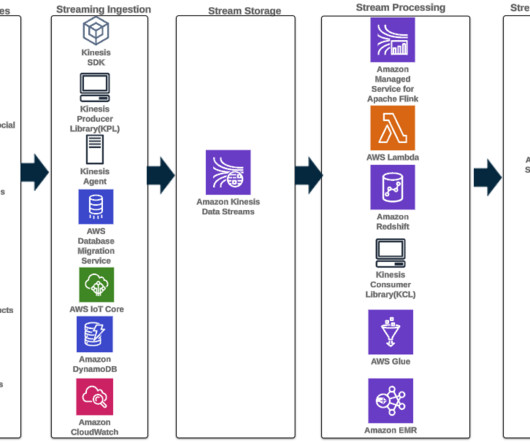

Currently, a handful of startups offer “reverse” extract, transform, and load (ETL), in which they copy data from a customer’s data warehouse or data platform back into systems of engagement where business users do their work. Sharing Customer 360 insights back without data replication.

Let's personalize your content