Building a RAG Pipeline for Semi-structured Data with LangChain

Analytics Vidhya

DECEMBER 1, 2023

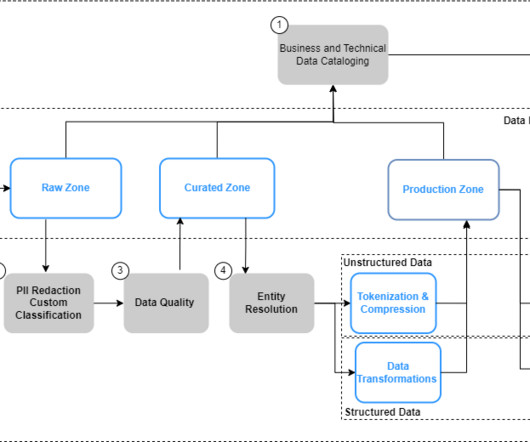

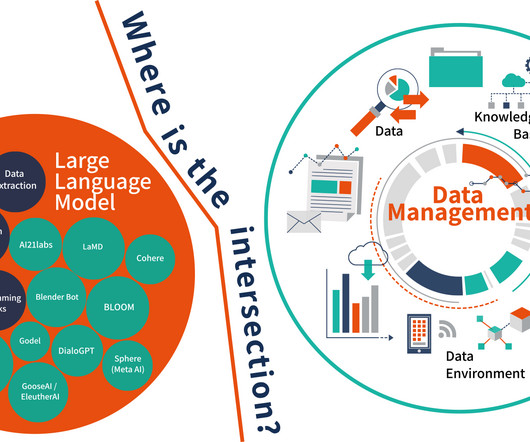

Many tools and applications are being built around retrieval-augmented generation (RAG), including vector stores, retrieval frameworks, and LLMs. Together they make it convenient to work with custom documents, and frameworks such as LangChain extend this to semi-structured data as well. Working with long, dense texts has never been so easy and fun.
