Apache Ozone Metadata Explained

Cloudera

JUNE 2, 2021

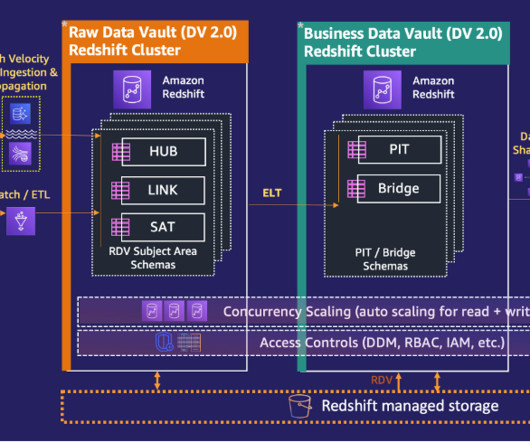

As an important part of achieving better scalability, Ozone divides metadata management among different services. The Ozone Manager (OM) service manages the metadata of the namespace, such as volumes, buckets, and keys. The Datanode service manages the metadata of the blocks, containers, and pipelines running on the datanode.
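To make the split concrete, here is a minimal toy model in Python. It is a sketch only, not Ozone's actual API: the class names `ToyOzoneManager` and `ToyDatanode`, their methods, and the helper `key_size` are all hypothetical, and pipelines are left out for brevity. The one assumption borrowed from Ozone is that a block is addressed by a container ID plus a local ID within that container.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class BlockID:
    """Address of a block: the container it lives in plus a local ID."""
    container_id: int
    local_id: int


@dataclass
class ToyOzoneManager:
    """Hypothetical stand-in for OM: holds namespace metadata only.

    Maps volume -> bucket -> key -> list of BlockID.
    """
    namespace: dict = field(default_factory=dict)

    def put_key(self, volume, bucket, key, block_ids):
        buckets = self.namespace.setdefault(volume, {})
        buckets.setdefault(bucket, {})[key] = list(block_ids)

    def lookup_key(self, volume, bucket, key):
        return self.namespace[volume][bucket][key]


@dataclass
class ToyDatanode:
    """Hypothetical stand-in for a datanode: holds block metadata only.

    Maps container ID -> local block ID -> block length in bytes.
    """
    containers: dict = field(default_factory=dict)

    def put_block(self, block_id, length):
        self.containers.setdefault(block_id.container_id, {})[block_id.local_id] = length

    def block_length(self, block_id):
        return self.containers[block_id.container_id][block_id.local_id]


def key_size(om, dn, volume, bucket, key):
    """Resolve a key through the namespace, then sum its block lengths."""
    return sum(dn.block_length(b) for b in om.lookup_key(volume, bucket, key))
```

In this sketch the namespace service never stores block lengths and the datanode never sees volume, bucket, or key names, so each side can grow independently of the other.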
