Data architecture strategy for data quality

IBM Big Data Hub

JANUARY 5, 2023

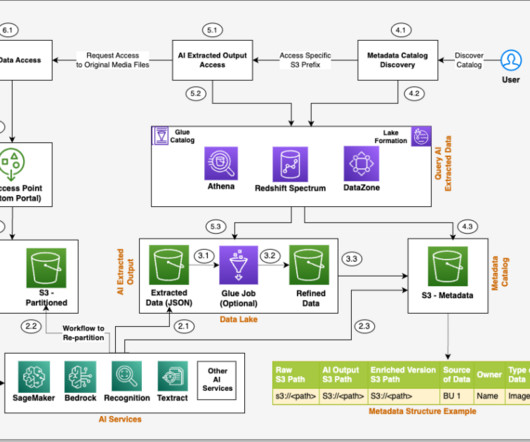

Several factors determine the quality of your enterprise data like accuracy, completeness, consistency, to name a few. But there’s another factor of data quality that doesn’t get the recognition it deserves: your data architecture. How the right data architecture improves data quality.

Let's personalize your content