Modernize your ETL platform with AWS Glue Studio: A case study from BMS

AWS Big Data

DECEMBER 13, 2023

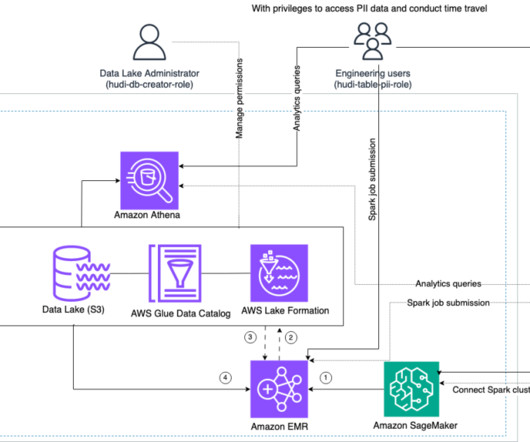

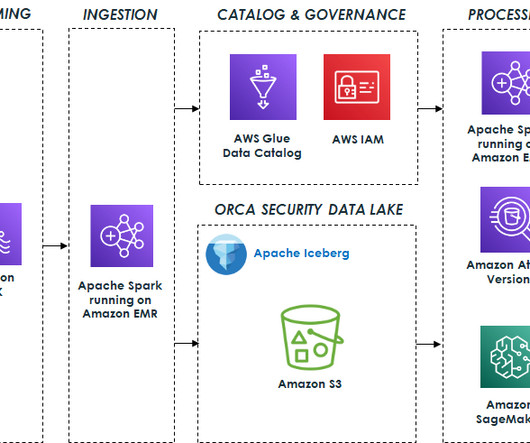

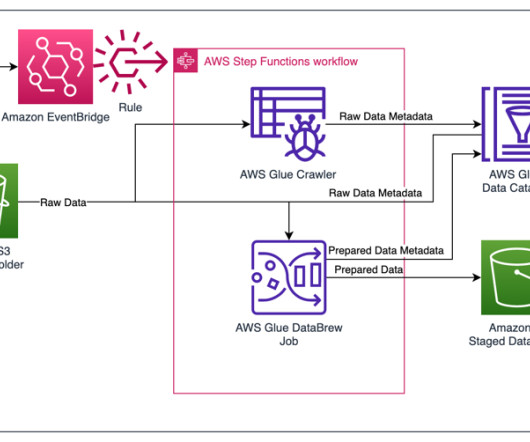

In addition to using native managed AWS services that BMS didn’t need to worry about upgrading, BMS was looking to offer an ETL service to non-technical business users that could visually compose data transformation workflows and seamlessly run them on the AWS Glue Apache Spark-based serverless data integration engine.

Let's personalize your content